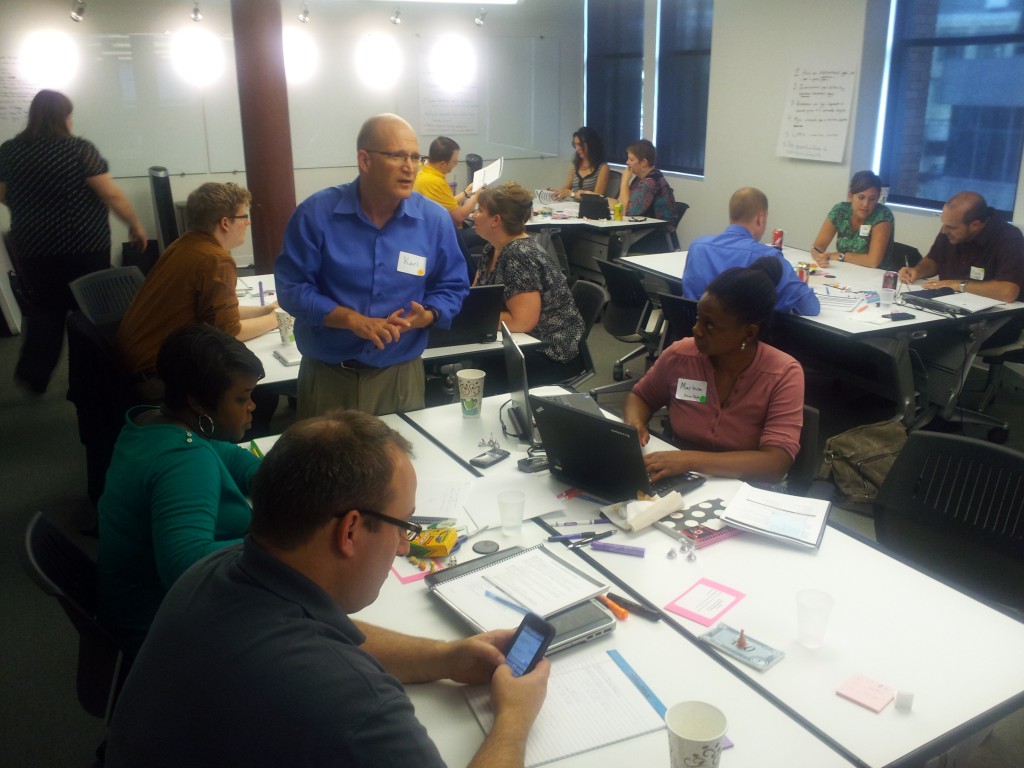

Another successful Learning Game Design workshop is in the books. Sharon Boller and Karl Kapp gave their first joint session in May of 2013 at ASTD International in Dallas… and the gaming goodness continued in Indianapolis on August 28th. We had a full room, with many participants coming from the Indianapolis area. Some out-of-town guests joined the party as well.

I assisted Sharon and Karl when they delivered this workshop at ASTD ICE, but this was the first time I actually got to experience it as a participant. It was an absolute luxury to spend an entire day playing games, learning about various game mechanics and game elements, and prototyping a game with a small team.

ExactTarget was our gracious host for the workshop. They have a great office space, and even provided participants with a midday ice cream break!

Flow of the day

Once initial introductions were out of the way, we spent some time going over Core Goals, Dynamics and Elements found in learning games. It was a nice way to gain some quick exposure to the terminology… without the workshop turning into a lecture.

Our terminology review only lasted about 20 minutes, and then we were turned loose to, you guessed it, play games! This was a workshop highlight, and helped “break the ice” among participants. Sharon and Karl carefully selected games, one competitive and one collaborative, that showcased the various game elements and dynamics we were discussing.

Our play session led nicely into a short discussion on best practices to follow when designing games. Having just played two games and noted the mechanics I liked and disliked, I found these practices much easier to conceptualize.

After lunch, we were split into teams for a fun review exercise… using Knowledge Guru®! Sharon took all of the content on game mechanics, elements and dynamics (much of it can be found in the Learning Game Design blog series) and used it to create a Guru game called Game Design Guru. Teams competed against each other for 10 or 15 minutes, trying to get the high score. The spaced learning and repetition built into the game engine, and into the workshop itself, really helped reinforce the new terminology I had learned.

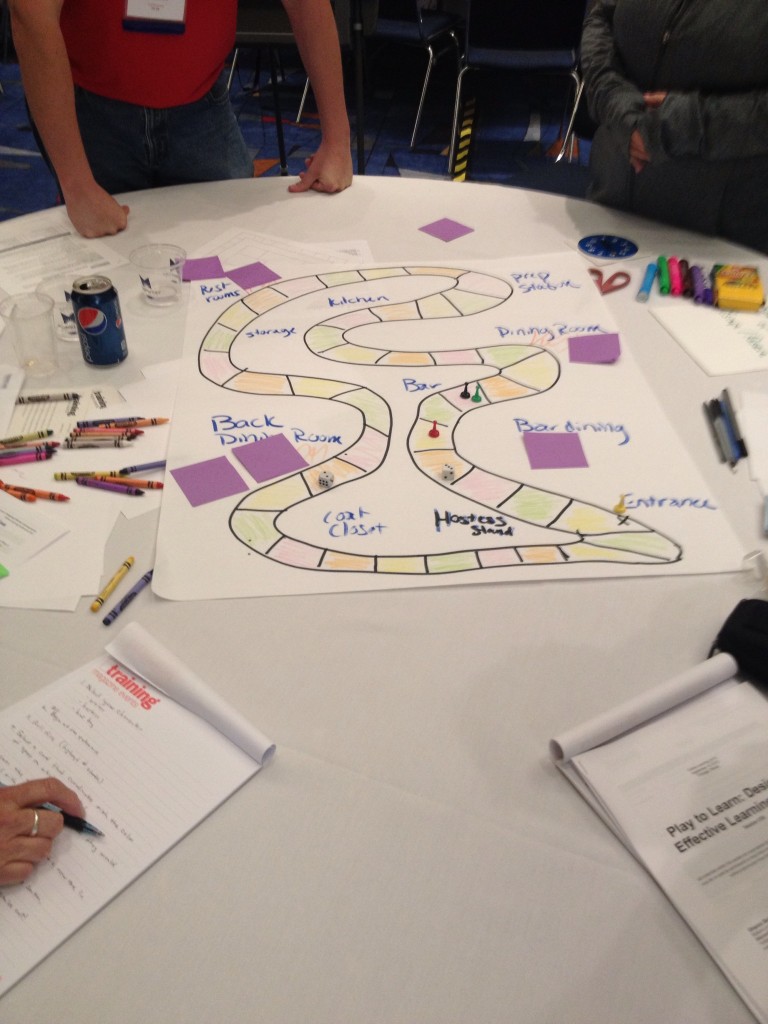

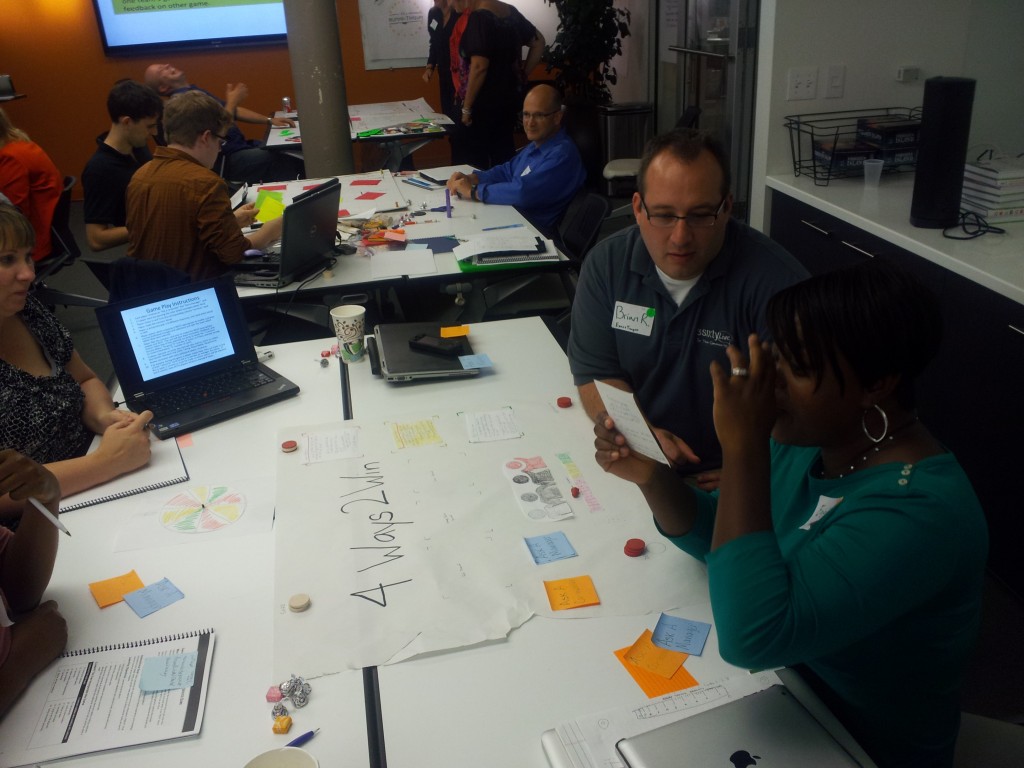

After our game, we broke into teams and spent the rest of the afternoon prototyping a game of our own. I was on a team with a fellow BLPer, Corey Callahan, and one of our clients. The client is currently developing ideas for a game she can use at her company, so we were able to use what we learned throughout the day to make a prototype she can (hopefully) actually use.

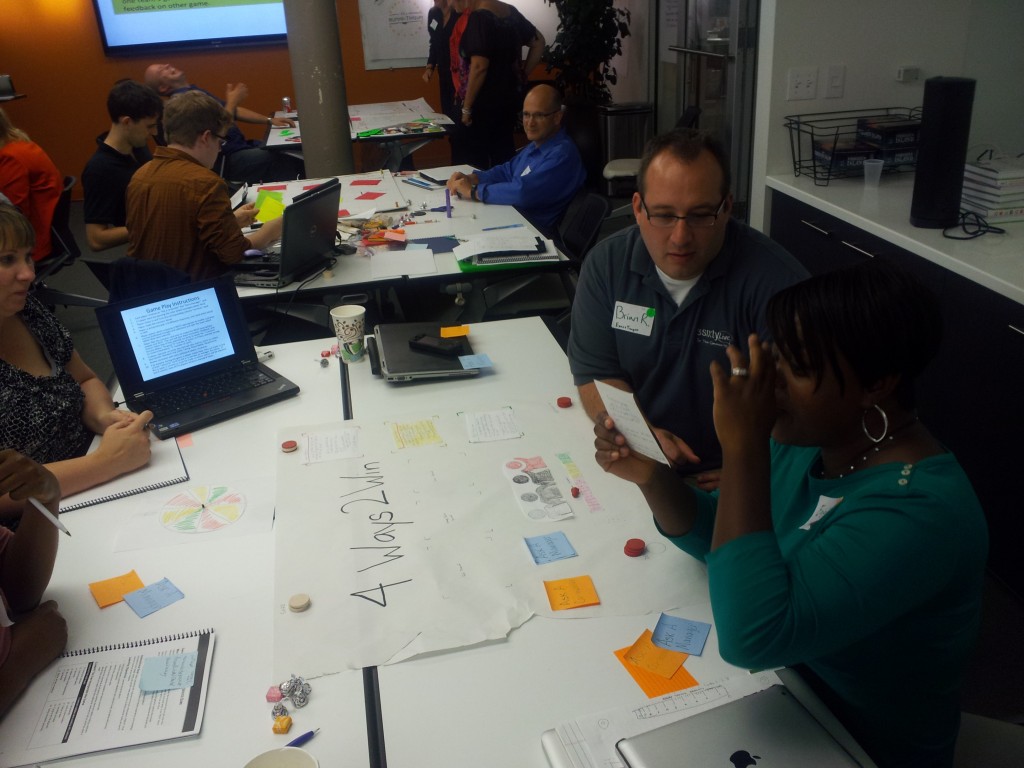

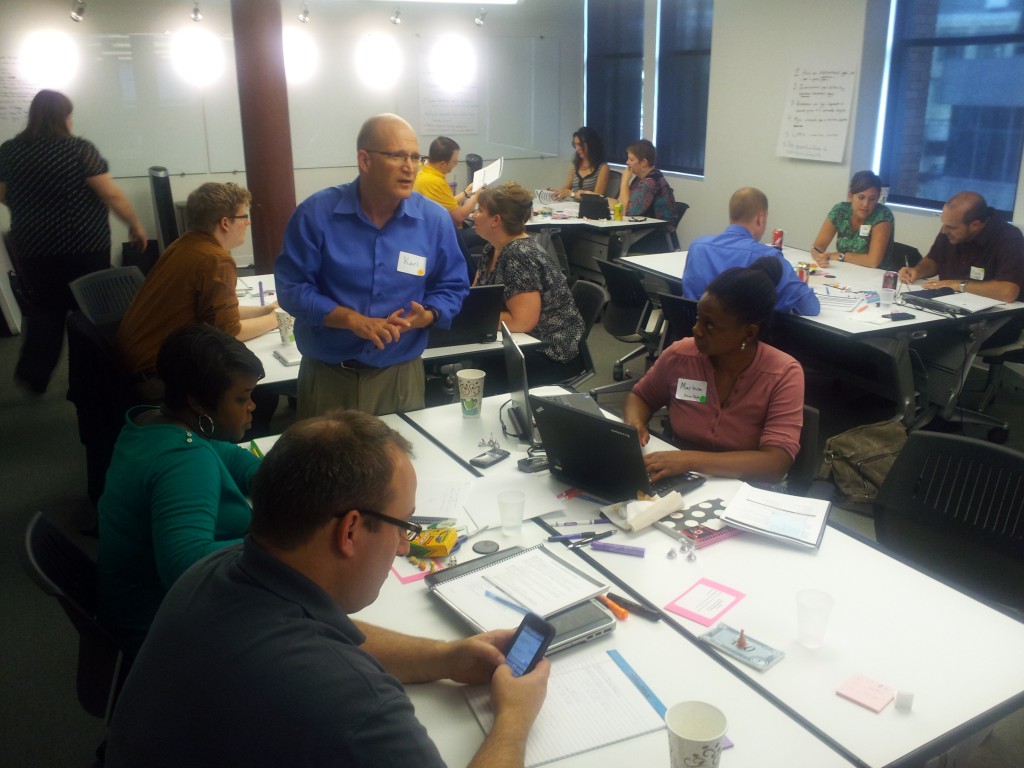

Once teams created their prototypes, we rotated to another table and playtested. The feedback the game designers received was just as valuable as the content Sharon and Karl taught earlier on; it’s only through trying to make a great game, getting feedback, and improving that we actually get better as game designers.

Karl summed it all up best towards the end of the day, when he said learning game design really comes down to “knowing what rules to break.” After a day of learning best practices and trying out our new skills, I left the workshop ready to produce my next learning game prototype… and make it better.

Games to Play and Evaluate

Sharon has compiled a list of games you can evaluate, including some game mechanics and elements to look out for as you do.

See the list here

Game Design Guru

You can play Game Design Guru to learn basic learning game design terminology.

Play here

Prototypes

Here is an example of a paper prototype created by participants at the Play to Learn workshop.

Attend a Workshop

Want to learn more about this workshop? Interested in attending in the future? Two more sessions are coming up this year: one in Chicago and another in Las Vegas. Sharon and Karl can also deliver a private workshop just for your organization.

Click here to learn more and see upcoming dates.

The Chicago workshop is a shorter, 2.5-hour version of this workshop.